Longhorn,企業級云原生容器分布式存儲之監控

2022-10-13 分類: 網站建設

設置 Prometheus 和 Grafana 來監控 Longhorn

概覽Longhorn 在 REST 端點 http://LONGHORN_MANAGER_IP:PORT/metrics 上以 Prometheus 文本格式原生公開指標。有關所有可用指標的說明,請參閱 Longhorn's metrics。您可以使用 Prometheus, Graphite, Telegraf 等任何收集工具來抓取這些指標,然后通過 Grafana 等工具將收集到的數據可視化。

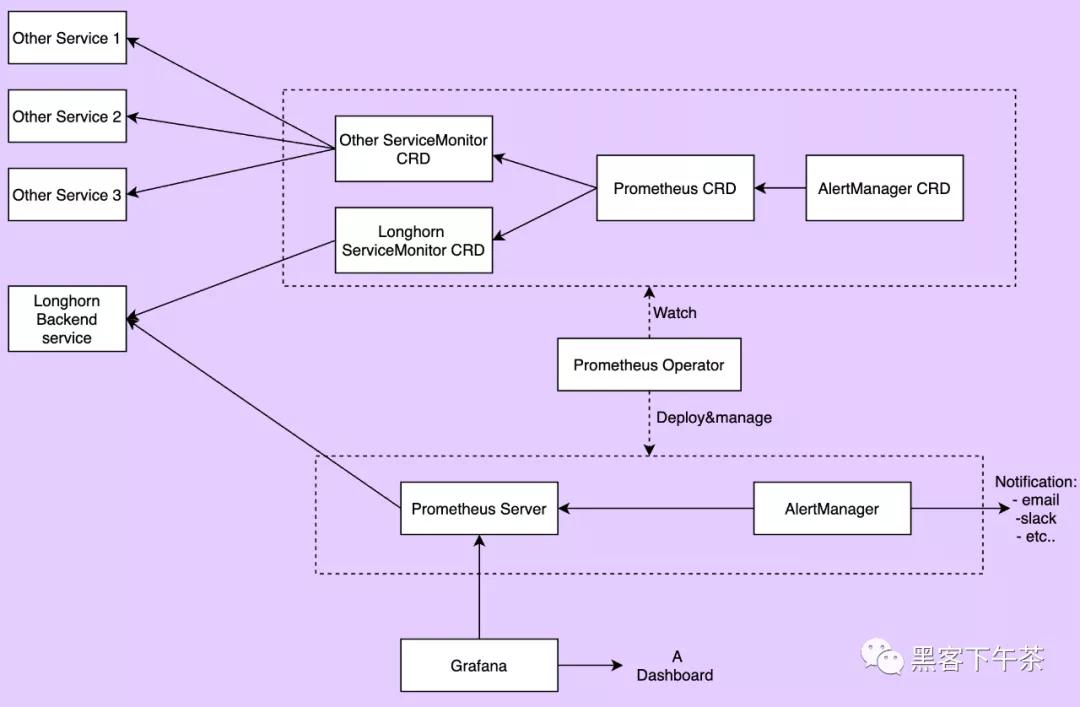

本文檔提供了一個監控 Longhorn 的示例設置。監控系統使用 Prometheus 收集數據和警報,使用 Grafana 將收集的數據可視化/儀表板(visualizing/dashboarding)。高級概述來看,監控系統包含:

Prometheus 服務器從 Longhorn 指標端點抓取和存儲時間序列數據。Prometheus 還負責根據配置的規則和收集的數據生成警報。Prometheus 服務器然后將警報發送到 Alertmanager。 AlertManager 然后管理這些警報(alerts),包括靜默(silencing)、抑制(inhibition)、聚合(aggregation)和通過電子郵件、呼叫通知系統和聊天平臺等方法發送通知。 Grafana 向 Prometheus 服務器查詢數據并繪制儀表板進行可視化。下圖描述了監控系統的詳細架構。

上圖中有 2 個未提及的組件:

Longhorn 后端服務是指向 Longhorn manager pods 集的服務。Longhorn 的指標在端點 http://LONGHORN_MANAGER_IP:PORT/metrics 的 Longhorn manager pods 中公開。 Prometheus operator 使在 Kubernetes 上運行 Prometheus 變得非常容易。operator 監視 3 個自定義資源:ServiceMonitor、Prometheus 和 AlertManager。當用戶創建這些自定義資源時,Prometheus Operator 會使用用戶指定的配置部署和管理 Prometheus server, AlerManager。安裝

按照此說明將所有組件安裝到 monitoring 命名空間中。要將它們安裝到不同的命名空間中,請更改字段 namespace: OTHER_NAMESPACE

創建 monitoring 命名空間

apiVersion: v1 kind: Namespace metadata: name: monitoring安裝 Prometheus Operator

部署 Prometheus Operator 及其所需的 ClusterRole、ClusterRoleBinding 和 Service Account。

apiVersion: rbac.authorization.k8s.io/v1 kind: ClusterRoleBinding metadata: labels: app.kubernetes.io/component: controller app.kubernetes.io/name: prometheus-operator app.kubernetes.io/version: v0.38.3 name: prometheus-operator namespace: monitoring roleRef: apiGroup: rbac.authorization.k8s.io kind: ClusterRole name: prometheus-operator subjects: - kind: ServiceAccount name: prometheus-operator namespace: monitoring --- apiVersion: rbac.authorization.k8s.io/v1 kind: ClusterRole metadata: labels: app.kubernetes.io/component: controller app.kubernetes.io/name: prometheus-operator app.kubernetes.io/version: v0.38.3 name: prometheus-operator namespace: monitoring rules: - apiGroups: - apiextensions.k8s.io resources: - customresourcedefinitions verbs: - create - apiGroups: - apiextensions.k8s.io resourceNames: - alertmanagers.monitoring.coreos.com - podmonitors.monitoring.coreos.com - prometheuses.monitoring.coreos.com - prometheusrules.monitoring.coreos.com - servicemonitors.monitoring.coreos.com - thanosrulers.monitoring.coreos.com resources: - customresourcedefinitions verbs: - get - update - apiGroups: - monitoring.coreos.com resources: - alertmanagers - alertmanagers/finalizers - prometheuses - prometheuses/finalizers - thanosrulers - thanosrulers/finalizers - servicemonitors - podmonitors - prometheusrules verbs: - '*' - apiGroups: - apps resources: - statefulsets verbs: - '*' - apiGroups: - "" resources: - configmaps - secrets verbs: - '*' - apiGroups: - "" resources: - pods verbs: - list - delete - apiGroups: - "" resources: - services - services/finalizers - endpoints verbs: - get - create - update - delete - apiGroups: - "" resources: - nodes verbs: - list - watch - apiGroups: - "" resources: - namespaces verbs: - get - list - watch --- apiVersion: apps/v1 kind: Deployment metadata: labels: app.kubernetes.io/component: controller app.kubernetes.io/name: prometheus-operator app.kubernetes.io/version: v0.38.3 name: prometheus-operator namespace: monitoring spec: replicas: 1 selector: matchLabels: app.kubernetes.io/component: controller app.kubernetes.io/name: prometheus-operator template: metadata: labels: app.kubernetes.io/component: controller app.kubernetes.io/name: prometheus-operator app.kubernetes.io/version: v0.38.3 spec: containers: - args: - --kubelet-service=kube-system/kubelet - --logtostderr=true - --config-reloader-image=jimmidyson/configmap-reload:v0.3.0 - --prometheus-config-reloader=quay.io/prometheus-operator/prometheus-config-reloader:v0.38.3 image: quay.io/prometheus-operator/prometheus-operator:v0.38.3 name: prometheus-operator ports: - containerPort: 8080 name: http resources: limits: cpu: 200m memory: 200Mi requests: cpu: 100m memory: 100Mi securityContext: allowPrivilegeEscalation: false nodeSelector: beta.kubernetes.io/os: linux securityContext: runAsNonRoot: true runAsUser: 65534 serviceAccountName: prometheus-operator --- apiVersion: v1 kind: ServiceAccount metadata: labels: app.kubernetes.io/component: controller app.kubernetes.io/name: prometheus-operator app.kubernetes.io/version: v0.38.3 name: prometheus-operator namespace: monitoring --- apiVersion: v1 kind: Service metadata: labels: app.kubernetes.io/component: controller app.kubernetes.io/name: prometheus-operator app.kubernetes.io/version: v0.38.3 name: prometheus-operator namespace: monitoring spec: clusterIP: None ports: - name: http port: 8080 targetPort: http selector: app.kubernetes.io/component: controller app.kubernetes.io/name: prometheus-operator安裝 Longhorn ServiceMonitor

Longhorn ServiceMonitor 有一個標簽選擇器 app: longhorn-manager 來選擇 Longhorn 后端服務。稍后,Prometheus CRD 可以包含 Longhorn ServiceMonitor,以便 Prometheus server 可以發現所有 Longhorn manager pods 及其端點。

apiVersion: monitoring.coreos.com/v1 kind: ServiceMonitor metadata: name: longhorn-prometheus-servicemonitor namespace: monitoring labels: name: longhorn-prometheus-servicemonitor spec: selector: matchLabels: app: longhorn-manager namespaceSelector: matchNames: - longhorn-system endpoints: - port: manager安裝和配置 Prometheus AlertManager

使用 3 個實例創建一個高可用的 Alertmanager 部署:

apiVersion: monitoring.coreos.com/v1 kind: Alertmanager metadata: name: longhorn namespace: monitoring spec: replicas: 3除非提供有效配置,否則 Alertmanager 實例將無法啟動。有關 Alertmanager 配置的更多說明,請參見此處。下面的代碼給出了一個示例配置:

global: resolve_timeout: 5m route: group_by: [alertname] receiver: email_and_slack receivers: - name: email_and_slack email_configs: - to: <the email address to send notifications to> from: <the sender address> smarthost: <the SMTP host through which emails are sent> # SMTP authentication information. auth_username: <the username> auth_identity: <the identity> auth_password: <the password> headers: subject: 'Longhorn-Alert' text: |- {{ range .Alerts }} *Alert:* {{ .Annotations.summary }} - `{{ .Labels.severity }}` *Description:* {{ .Annotations.description }} *Details:* {{ range .Labels.SortedPairs }} • *{{ .Name }}:* `{{ .Value }}` {{ end }} {{ end }} slack_configs: - api_url: <the Slack webhook URL> channel: <the channel or user to send notifications to> text: |- {{ range .Alerts }} *Alert:* {{ .Annotations.summary }} - `{{ .Labels.severity }}` *Description:* {{ .Annotations.description }} *Details:* {{ range .Labels.SortedPairs }} • *{{ .Name }}:* `{{ .Value }}` {{ end }} {{ end }}將上述 Alertmanager 配置保存在名為 alertmanager.yaml 的文件中,并使用 kubectl 從中創建一個 secret。

Alertmanager 實例要求 secret 資源命名遵循 alertmanager-{ALERTMANAGER_NAME} 格式。在上一步中,Alertmanager 的名稱是 longhorn,所以 secret 名稱必須是 alertmanager-longhorn

$ kubectl create secret generic alertmanager-longhorn --from-file=alertmanager.yaml -n monitoring為了能夠查看 Alertmanager 的 Web UI,請通過 Service 公開它。一個簡單的方法是使用 NodePort 類型的 Service :

apiVersion: v1 kind: Service metadata: name: alertmanager-longhorn namespace: monitoring spec: type: NodePort ports: - name: web nodePort: 30903 port: 9093 protocol: TCP targetPort: web selector: alertmanager: longhorn創建上述服務后,您可以通過節點的 IP 和端口 30903 訪問 Alertmanager 的 web UI。

使用上面的 NodePort 服務進行快速驗證,因為它不通過 TLS 連接進行通信。您可能希望將服務類型更改為 ClusterIP,并設置一個 Ingress-controller 以通過 TLS 連接公開 Alertmanager 的 web UI。

安裝和配置 Prometheus server

創建定義警報條件的 PrometheusRule 自定義資源。

apiVersion: monitoring.coreos.com/v1 kind: PrometheusRule metadata: labels: prometheus: longhorn role: alert-rules name: prometheus-longhorn-rules namespace: monitoring spec: groups: - name: longhorn.rules rules: - alert: LonghornVolumeUsageCritical annotations: description: Longhorn volume {{$labels.volume}} on {{$labels.node}} is at {{$value}}% used for more than 5 minutes. summary: Longhorn volume capacity is over 90% used. expr: 100 * (longhorn_volume_usage_bytes / longhorn_volume_capacity_bytes) > 90 for: 5m labels: issue: Longhorn volume {{$labels.volume}} usage on {{$labels.node}} is critical. severity: critical有關如何定義警報規則的更多信息,請參見https://prometheus.io/docs/prometheus/latest/configuration/alerting_rules/#alerting-rules

如果激活了 RBAC 授權,則為 Prometheus Pod 創建 ClusterRole 和 ClusterRoleBinding:

apiVersion: v1 kind: ServiceAccount metadata: name: prometheus namespace: monitoring apiVersion: rbac.authorization.k8s.io/v1beta1 kind: ClusterRole metadata: name: prometheus namespace: monitoring rules: - apiGroups: [""] resources: - nodes - services - endpoints - pods verbs: ["get", "list", "watch"] - apiGroups: [""] resources: - configmaps verbs: ["get"] - nonResourceURLs: ["/metrics"] verbs: ["get"] apiVersion: rbac.authorization.k8s.io/v1beta1 kind: ClusterRoleBinding metadata: name: prometheus roleRef: apiGroup: rbac.authorization.k8s.io kind: ClusterRole name: prometheus subjects: - kind: ServiceAccount name: prometheus namespace: monitoring創建 Prometheus 自定義資源。請注意,我們在 spec 中選擇了 Longhorn 服務監視器(service monitor)和 Longhorn 規則。

apiVersion: monitoring.coreos.com/v1 kind: Prometheus metadata: name: prometheus namespace: monitoring spec: replicas: 2 serviceAccountName: prometheus alerting: alertmanagers: - namespace: monitoring name: alertmanager-longhorn port: web serviceMonitorSelector: matchLabels: name: longhorn-prometheus-servicemonitor ruleSelector: matchLabels: prometheus: longhorn role: alert-rules為了能夠查看 Prometheus 服務器的 web UI,請通過 Service 公開它。一個簡單的方法是使用 NodePort 類型的 Service:

apiVersion: v1 kind: Service metadata: name: prometheus namespace: monitoring spec: type: NodePort ports: - name: web nodePort: 30904 port: 9090 protocol: TCP targetPort: web selector: prometheus: prometheus創建上述服務后,您可以通過節點的 IP 和端口 30904 訪問 Prometheus server 的 web UI。

此時,您應該能夠在 Prometheus server UI 的目標和規則部分看到所有 Longhorn manager targets 以及 Longhorn rules。

使用上述 NodePort service 進行快速驗證,因為它不通過 TLS 連接進行通信。您可能希望將服務類型更改為 ClusterIP,并設置一個 Ingress-controller 以通過 TLS 連接公開 Prometheus server 的 web UI。

安裝 Grafana

創建 Grafana 數據源配置:

apiVersion: v1 kind: ConfigMap metadata: name: grafana-datasources namespace: monitoring data: prometheus.yaml: |- { "apiVersion": 1, "datasources": [ { "access":"proxy", "editable": true, "name": "prometheus", "orgId": 1, "type": "prometheus", "url": "http://prometheus:9090", "version": 1 } ] }創建 Grafana 部署:

apiVersion: apps/v1 kind: Deployment metadata: name: grafana namespace: monitoring labels: app: grafana spec: replicas: 1 selector: matchLabels: app: grafana template: metadata: name: grafana labels: app: grafana spec: containers: - name: grafana image: grafana/grafana:7.1.5 ports: - name: grafana containerPort: 3000 resources: limits: memory: "500Mi" cpu: "300m" requests: memory: "500Mi" cpu: "200m" volumeMounts: - mountPath: /var/lib/grafana name: grafana-storage - mountPath: /etc/grafana/provisioning/datasources name: grafana-datasources readOnly: false volumes: - name: grafana-storage emptyDir: {} - name: grafana-datasources configMap: defaultMode: 420 name: grafana-datasources在 NodePort 32000 上暴露 Grafana:

apiVersion: v1 kind: Service metadata: name: grafana namespace: monitoring spec: selector: app: grafana type: NodePort ports: - port: 3000 targetPort: 3000 nodePort: 32000使用上述 NodePort 服務進行快速驗證,因為它不通過 TLS 連接進行通信。您可能希望將服務類型更改為 ClusterIP,并設置一個 Ingress-controller 以通過 TLS 連接公開 Grafana。

使用端口 32000 上的任何節點 IP 訪問 Grafana 儀表板。默認憑據為:

User: admin Pass: admin安裝 Longhorn dashboard

進入 Grafana 后,導入預置的面板:https://grafana.com/grafana/dashboards/13032

有關如何導入 Grafana dashboard 的說明,請參閱 https://grafana.com/docs/grafana/latest/reference/export_import/

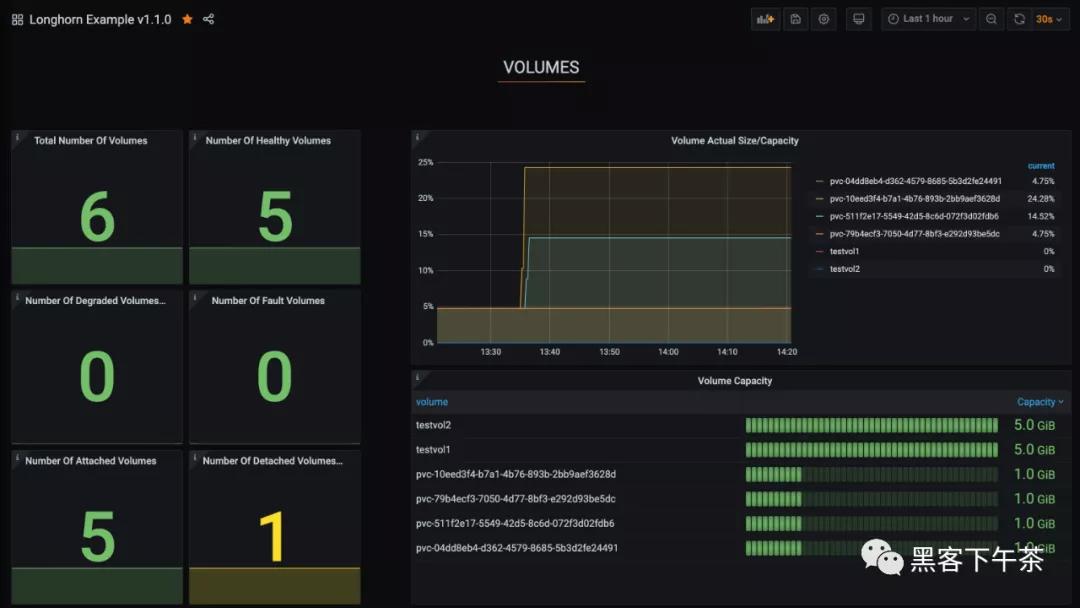

成功后,您應該會看到以下 dashboard:

將 Longhorn 指標集成到 Rancher 監控系統中

關于 Rancher 監控系統

使用 Rancher,您可以通過與的開源監控解決方案 Prometheus 的集成來監控集群節點、Kubernetes 組件和軟件部署的狀態和進程。

有關如何部署/啟用 Rancher 監控系統的說明,請參見https://rancher.com/docs/rancher/v2.x/en/monitoring-alerting/

將 Longhorn 指標添加到 Rancher 監控系統

如果您使用 Rancher 來管理您的 Kubernetes 并且已經啟用 Rancher 監控,您可以通過簡單地部署以下 ServiceMonitor 將 Longhorn 指標添加到 Rancher 監控中:

apiVersion: monitoring.coreos.com/v1 kind: ServiceMonitor metadata: name: longhorn-prometheus-servicemonitor namespace: longhorn-system labels: name: longhorn-prometheus-servicemonitor spec: selector: matchLabels: app: longhorn-manager namespaceSelector: matchNames: - longhorn-system endpoints: - port: manager創建 ServiceMonitor 后,Rancher 將自動發現所有 Longhorn 指標。

然后,您可以設置 Grafana 儀表板以進行可視化。

Longhorn 監控指標

Volume(卷)

指標名 說明 示例 longhorn_volume_actual_size_bytes 對應節點上卷的每個副本使用的實際空間 longhorn_volume_actual_size_bytes{node="worker-2",volume="testvol"} 1.1917312e+08 longhorn_volume_capacity_bytes 此卷的配置大小(以 byte 為單位) longhorn_volume_capacity_bytes{node="worker-2",volume="testvol"} 6.442450944e+09 longhorn_volume_state 本卷狀態:1=creating, 2=attached, 3=Detached, 4=Attaching, 5=Detaching, 6=Deleting longhorn_volume_state{node="worker-2",volume="testvol"} 2 longhorn_volume_robustness 本卷的健壯性: 0=unknown, 1=healthy, 2=degraded, 3=faulted longhorn_volume_robustness{node="worker-2",volume="testvol"} 1Node(節點)

指標名 說明 示例 longhorn_node_status 該節點的狀態:1=true, 0=false longhorn_node_status{condition="ready",condition_reason="",node="worker-2"} 1

網頁名稱:Longhorn,企業級云原生容器分布式存儲之監控

分享網址:http://m.newbst.com/news14/204914.html

成都網站建設公司_創新互聯,為您提供移動網站建設、網站維護、建站公司、外貿建站、關鍵詞優化、網站制作

聲明:本網站發布的內容(圖片、視頻和文字)以用戶投稿、用戶轉載內容為主,如果涉及侵權請盡快告知,我們將會在第一時間刪除。文章觀點不代表本網站立場,如需處理請聯系客服。電話:028-86922220;郵箱:631063699@qq.com。內容未經允許不得轉載,或轉載時需注明來源: 創新互聯

猜你還喜歡下面的內容

- 美國softlayer服務器機房簡介 2022-10-13

- 海外服務器和國內服務器應該用哪個好? 2022-10-13

- 服務器租用配置怎么選?該看哪些參數 2022-10-13

- 高防服務器的CDN有哪些作用呢? 2022-10-13

- ev代碼簽名證書一般有哪些基本的優點呢 2022-10-13

- 數據中心基礎設施如何為5G熱潮做好準備 2022-10-13

- 代碼簽名證書費用多少?代碼簽名證書申請? 2022-10-13

- 如何對網站信息加密 2022-10-13

- 云服務器存在哪些技術風險? 2022-10-13

- WAF和網絡防火墻、網頁防篡改、IPS三者的區別 2022-10-13

- 為什么使用代理服務器?代理服務器設置操作步驟 2022-10-13

- C端算力的“以租代購” 2022-10-13

- 提效降本,您不可不知道的云架構秘訣 2022-10-13

- 在哪里能買到免費的SSL證書 2022-10-13

- https證書配置不成功應該怎么辦? 2022-10-13

- 如何使用aDLL自動識別DLL劫持漏洞 2022-10-13

- 考慮全球云計算部署的10個指南 2022-10-13

- DNS服務器是什么? 2022-10-13

- SSL證書的主要作用是什么不安裝會有什么危害 2022-10-13